Accurate measurement of mechanical strain and stress in engineering applications relies heavily on proper calibration procedures for strain gauge systems. A strain gauge serves as a critical sensor that converts mechanical deformation into electrical signals, enabling precise monitoring of structural integrity and material behavior under various loading conditions. The calibration process ensures that these sensitive instruments provide reliable, repeatable measurements essential for quality control, safety assessments, and performance optimization across industries ranging from aerospace to civil engineering.

Understanding the fundamental principles behind strain gauge operation forms the foundation for effective calibration practices. These precision instruments operate on the principle that electrical resistance changes in proportion to the mechanical strain applied to the sensing element. When properly calibrated, a strain gauge system can detect minute deformations on the order of a few microstrain, making it invaluable for high-precision testing applications where accuracy and reliability are paramount.

Fundamental Principles of Strain Gauge Technology

Basic Operating Mechanisms

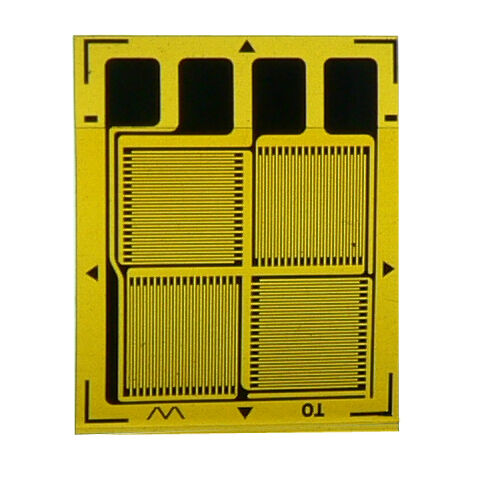

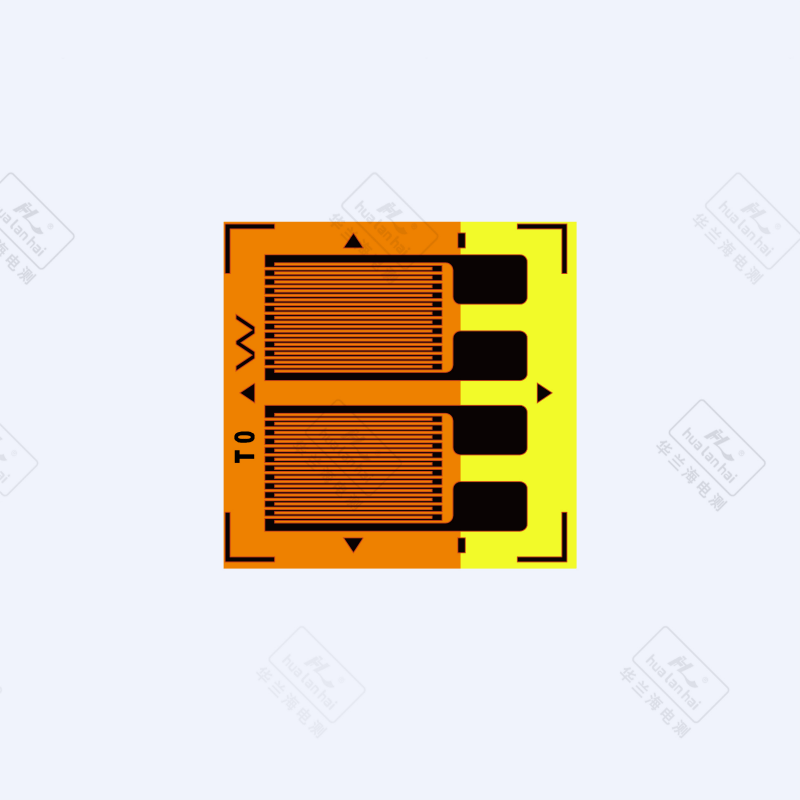

The core functionality of any strain gauge depends on strain-induced changes in the electrical resistance of the sensing element. Stress applied to the gauge material changes both the geometry and the resistivity of the conductor: the geometric contribution dominates in metallic foil gauges, while the resistivity change (the piezoresistive effect) dominates in semiconductor gauges. Modern strain gauge designs use a range of materials, including metallic foils, semiconductor elements, and advanced composite materials, to achieve optimal sensitivity and temperature stability.
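
As a worked relation for the paragraph above, gauge sensitivity is commonly expressed through the gauge factor. The symbols used here ($GF$ for gauge factor, $\varepsilon$ for strain, $\nu$ for Poisson's ratio of the conductor, $\rho$ for resistivity) are standard notation rather than anything defined in this article:

$$
\frac{\Delta R}{R} = GF \cdot \varepsilon, \qquad GF = 1 + 2\nu + \frac{\Delta\rho / \rho}{\varepsilon}
$$

The term $1 + 2\nu$ captures the change in conductor geometry, and the last term captures the change in resistivity. Metallic foil gauges typically have a gauge factor of roughly 2, while semiconductor gauges can be far more sensitive because the resistivity term dominates.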

Temperature compensation represents a critical aspect of strain gauge operation, as thermal expansion and contraction can introduce significant measurement errors if not properly addressed. Self-temperature-compensated gauges incorporate materials with specific thermal characteristics that automatically adjust for temperature variations within defined operating ranges. Understanding these compensation mechanisms is essential for establishing accurate calibration procedures and maintaining measurement integrity throughout the testing process.

Electrical Configuration and Signal Conditioning

Strain gauge installations typically employ Wheatstone bridge configurations to maximize signal output and minimize common-mode noise interference. Quarter-bridge, half-bridge, and full-bridge arrangements each offer distinct advantages depending on the specific application requirements and measurement objectives. The bridge configuration directly impacts the calibration approach, as different arrangements require unique compensation strategies for temperature effects and mechanical loading conditions.
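
The effect of the bridge arrangement on signal level can be sketched with the usual small-strain approximation. This is a minimal illustration, not a vendor formula: the function names and the `active_arms` parameter are hypothetical, and the sketch assumes every active arm sees the same strain magnitude with signs arranged so that the contributions add.

```python
def bridge_output_ratio(strain, gauge_factor=2.0, active_arms=1):
    """Approximate bridge output V_out / V_excitation for small strains.

    active_arms: 1 = quarter bridge, 2 = half bridge, 4 = full bridge.
    Assumes all active arms contribute constructively at equal strain
    magnitude and ignores the small quarter-bridge non-linearity.
    """
    if active_arms not in (1, 2, 4):
        raise ValueError("active_arms must be 1, 2, or 4")
    return active_arms * gauge_factor * strain / 4.0


def strain_from_ratio(ratio, gauge_factor=2.0, active_arms=1):
    """Invert the small-strain approximation to recover strain."""
    if active_arms not in (1, 2, 4):
        raise ValueError("active_arms must be 1, 2, or 4")
    return 4.0 * ratio / (active_arms * gauge_factor)
```

Under these assumptions, 1000 microstrain on a quarter bridge with a gauge factor of 2 gives about 0.5 mV per volt of excitation, and the same strain on a full bridge gives about four times that, which is one reason the calibration approach differs between arrangements.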

Signal conditioning equipment plays a crucial role in converting the small resistance changes produced by the strain gauge into measurable voltage or current signals. High-quality amplifiers, filters, and analog-to-digital converters must be properly calibrated alongside the strain gauge itself to ensure accurate data acquisition. The entire measurement chain, from the sensing element through the signal conditioning system, requires systematic calibration to achieve the precision demanded by modern testing applications.
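
To make the measurement chain concrete, the sketch below walks a raw converter reading back to strain. Every parameter (converter resolution, reference voltage, amplifier gain, excitation voltage) is a hypothetical placeholder; real values come from the data sheets and calibration records of the actual equipment, and each of them carries its own calibration uncertainty.

```python
def counts_to_strain(counts, adc_bits, v_ref, gain, v_excitation,
                     gauge_factor=2.0, active_arms=1):
    """Convert a signed ADC reading to strain through the measurement chain.

    Assumes a bipolar converter whose full scale (2**(adc_bits - 1) counts)
    corresponds to v_ref volts at its input, an ideal amplifier with the
    given gain, and the small-strain bridge approximation.
    """
    full_scale_counts = 2 ** (adc_bits - 1)
    v_adc = counts / full_scale_counts * v_ref   # voltage at the converter input
    v_bridge = v_adc / gain                      # voltage at the bridge output
    ratio = v_bridge / v_excitation              # dimensionless V_out / V_ex
    return 4.0 * ratio / (active_arms * gauge_factor)
```

An error in any one stage (gain, reference, or excitation) scales the final strain value directly, which is why the paragraph above treats the whole chain as the object of calibration.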

Pre-Calibration Preparation and Setup

Equipment Requirements and Environmental Controls

Successful strain gauge calibration begins with establishing a controlled testing environment that minimizes external influences on measurement accuracy. Temperature stability within ±1°C is typically required, along with adequate vibration isolation and electromagnetic shielding to prevent interference with sensitive electrical measurements. The calibration facility should maintain consistent humidity levels and provide clean, dust-free conditions to protect both the strain gauge and associated instrumentation.
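
A logged temperature record can be screened against the ±1°C band before calibration data are accepted. The helper below is a hypothetical sketch; the function name, the setpoint, and the idea of checking against a fixed setpoint (rather than, say, the running mean) are assumptions made for illustration.

```python
def temperature_excursions(readings_c, setpoint_c, tolerance_c=1.0):
    """Return (index, reading) pairs that fall outside setpoint ± tolerance."""
    return [
        (i, t)
        for i, t in enumerate(readings_c)
        if abs(t - setpoint_c) > tolerance_c
    ]
```

An empty result suggests the record stayed inside the band; any returned entries identify the samples during which calibration data should be treated with suspicion.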

Precision reference standards form the backbone of any reliable calibration process. Deadweight calibrators, hydraulic loading systems, or mechanical testing machines capable of applying known forces or displacements serve as primary references for establishing traceability to national measurement standards. These reference devices must themselves be regularly calibrated and maintained to ensure continued accuracy throughout the calibration process.

Initial Inspection and Documentation

Before beginning the calibration procedure, thorough visual inspection of the strain gauge installation is essential to identify any potential issues that could affect measurement accuracy. Proper adhesive bonding, appropriate lead wire routing, and adequate moisture protection should be verified. Any signs of damage, contamination, or improper installation must be addressed before proceeding with calibration activities.

Complete documentation of the strain gauge specifications, installation details, and environmental conditions provides essential information for establishing appropriate calibration parameters. This documentation should include gauge factor values, temperature coefficient data, resistance specifications, and any special handling requirements provided by the manufacturer. Maintaining detailed records throughout the calibration process enables traceability and facilitates future recalibration activities.

Calibration Methodology and Procedures

Static Calibration Techniques

Static calibration involves applying known loads or displacements to the strain gauge while recording the corresponding electrical output signals. This process typically begins with establishing a zero-load baseline measurement, followed by incremental loading steps that span the intended measurement range. Each load increment should be maintained for sufficient time to allow thermal equilibration and signal stabilization before recording data points.

The loading sequence for strain gauge calibration typically includes both ascending and descending load cycles to evaluate hysteresis characteristics and repeatability. Multiple calibration cycles help identify any drift or instability issues that could affect long-term measurement accuracy. Statistical analysis of the calibration data provides confidence intervals and uncertainty estimates that are essential for establishing measurement traceability.
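
The loading sequence and the hysteresis evaluation described above can be sketched as follows. The function names are hypothetical, and expressing hysteresis as the largest ascending/descending gap relative to the full-scale output span is one common convention, assumed here rather than taken from the article.

```python
def load_schedule(max_load, steps):
    """Load points from zero up to max_load and back down to zero."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    ascending = [max_load * i / steps for i in range(steps + 1)]
    # Descend through the same points, without repeating the peak.
    return ascending + ascending[-2::-1]


def hysteresis_percent_fs(ascending_out, descending_out):
    """Largest ascending/descending output gap, as % of full-scale span.

    Both lists hold outputs at the same load points, ordered by
    increasing load.
    """
    if len(ascending_out) != len(descending_out):
        raise ValueError("both runs must cover the same load points")
    span = ascending_out[-1] - ascending_out[0]
    if span == 0:
        raise ValueError("full-scale output span is zero")
    worst_gap = max(abs(d - a) for a, d in zip(ascending_out, descending_out))
    return 100.0 * worst_gap / span
```

Repeating the schedule over several cycles and comparing the hysteresis figure from each cycle gives a simple first look at repeatability and drift.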

Dynamic Calibration Considerations

Dynamic calibration addresses the frequency response characteristics of the strain gauge system, ensuring accurate measurements under time-varying loading conditions. This process involves applying sinusoidal or step-function inputs across the frequency range of interest while monitoring both the amplitude and phase response. Dynamic calibration is particularly important for applications involving vibration analysis, impact testing, or other time-varying phenomena.

Specialized equipment such as electrodynamic shakers or pneumatic actuators may be required to generate the controlled dynamic inputs necessary for frequency response characterization. The calibration process must account for the mechanical properties of the test structure, mounting hardware, and any coupling devices used to transfer loads to the strain gauge. Dynamic calibration results are typically presented as frequency response functions that define the system behavior across the operational bandwidth.
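
One point of a frequency response function can be estimated from a sinusoidal test by projecting the reference input and the gauge output onto the excitation frequency. The sketch below uses a single-bin Fourier sum; the function names are hypothetical, and it assumes uniformly sampled records that span a whole number of excitation periods.

```python
import cmath
import math


def complex_amplitude(samples, freq_hz, sample_rate_hz):
    """Complex amplitude of the freq_hz component of a sampled signal.

    Assumes the record covers a whole number of periods of freq_hz.
    """
    n = len(samples)
    acc = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * k / sample_rate_hz)
        for k, x in enumerate(samples)
    )
    return 2.0 * acc / n


def frequency_response_point(input_samples, output_samples,
                             freq_hz, sample_rate_hz):
    """Return (gain, phase in degrees) of output relative to input."""
    a_in = complex_amplitude(input_samples, freq_hz, sample_rate_hz)
    a_out = complex_amplitude(output_samples, freq_hz, sample_rate_hz)
    if abs(a_in) == 0:
        raise ValueError("input has no component at the test frequency")
    h = a_out / a_in
    return abs(h), math.degrees(cmath.phase(h))
```

Repeating this at each test frequency and collecting the gain and phase pairs yields a tabulated frequency response function across the bandwidth of interest.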

Data Analysis and Calibration Factor Determination

Statistical Analysis Methods

Proper analysis of calibration data requires statistical methods that account for measurement uncertainty and provide reliable estimates of calibration coefficients. Linear regression analysis is commonly employed to establish the relationship between applied loads and strain gauge output signals. The slope of this relationship defines the calibration factor, while correlation coefficients and residual analysis provide measures of linearity and data quality.
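
The regression step can be illustrated with an ordinary least-squares fit written out by hand. This is a minimal sketch with hypothetical names; it treats the applied loads as exact and fits output against load, which is an assumption that a full analysis might revisit.

```python
def fit_calibration_line(loads, outputs):
    """Ordinary least-squares fit of output = slope * load + intercept.

    Returns (slope, intercept, r_squared, residuals). The slope is the
    calibration factor in output units per load unit.
    """
    n = len(loads)
    if n < 2 or n != len(outputs):
        raise ValueError("need at least two paired points")
    mean_x = sum(loads) / n
    mean_y = sum(outputs) / n
    sxx = sum((x - mean_x) ** 2 for x in loads)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(loads, outputs))
    if sxx == 0:
        raise ValueError("all load points are identical")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [y - (slope * x + intercept) for x, y in zip(loads, outputs)]
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((y - mean_y) ** 2 for y in outputs)
    r_squared = 1.0 - ss_res / ss_tot if ss_tot else 1.0
    return slope, intercept, r_squared, residuals
```

A high coefficient of determination alone does not demonstrate linearity; inspecting the residuals for a systematic bow or step is usually more informative.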

Uncertainty analysis forms a critical component of the calibration process, quantifying the various sources of error that contribute to overall measurement uncertainty. Type A uncertainties arise from statistical variations in repeated measurements, while Type B uncertainties result from systematic effects such as reference standard accuracy, environmental conditions, and instrumentation limitations. Combined uncertainty calculations follow established guidelines such as those provided in the Guide to the Expression of Uncertainty in Measurement.
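
The combination of Type A and Type B components can be sketched as below. The function names are hypothetical, and the sketch makes simplifying assumptions: the inputs are uncorrelated, all sensitivity coefficients are 1, Type B limits are treated as rectangular distributions, and a coverage factor of k = 2 is used for roughly 95% coverage.

```python
import math
import statistics


def type_a_uncertainty(repeated_readings):
    """Standard uncertainty of the mean from repeated readings."""
    n = len(repeated_readings)
    return statistics.stdev(repeated_readings) / math.sqrt(n)


def type_b_rectangular(half_width):
    """Standard uncertainty for a quantity known only to lie within ± half_width."""
    return half_width / math.sqrt(3)


def combined_standard_uncertainty(components):
    """Root-sum-square of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u * u for u in components))


def expanded_uncertainty(combined, coverage_factor=2.0):
    """Expanded uncertainty U = k * u_c."""
    return coverage_factor * combined
```

In use, each component (repeatability, reference standard accuracy, temperature effects, instrument resolution) is first converted to a standard uncertainty in the same units, and only then combined.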

Calibration Certificate Generation

The calibration certificate documents the results of the calibration process and provides essential information for users of the strain gauge system. This document should include calibration factors, uncertainty estimates, environmental conditions, reference standards used, and the validity period for the calibration. Clear presentation of this information ensures proper interpretation and application of the calibration results.

Traceability statements in the calibration certificate establish the connection between the strain gauge calibration and national or international measurement standards. This traceability chain demonstrates that the calibration has been performed using appropriately calibrated reference standards and follows recognized procedures. Regular participation in proficiency testing programs or measurement comparison exercises further validates the quality and reliability of the calibration process.

Quality Assurance and Validation

Verification Procedures

Independent verification of strain gauge calibration results provides additional confidence in measurement accuracy and helps identify any systematic errors in the calibration process. Verification may involve cross-checking results using alternative measurement methods, comparing with historical calibration data, or conducting inter-laboratory comparisons. These activities are particularly important for critical applications where measurement errors could have significant safety or economic consequences.

Regular monitoring of strain gauge performance through check standards or control charts enables early detection of drift or degradation that could affect measurement accuracy. Implementing statistical process control methods helps maintain consistent calibration quality and provides objective evidence of process stability. Any trends or unusual variations identified through monitoring activities should trigger investigation and corrective action as appropriate.
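
A check-standard record can be screened with simple control limits, as in the sketch below. The names are hypothetical, and the limits are set at the baseline mean ± 3 sample standard deviations, which is a simplification; formal individuals charts often estimate sigma from moving ranges instead.

```python
import statistics


def control_limits(baseline_readings, sigma_multiplier=3.0):
    """Return (lower, center, upper) limits from an in-control baseline."""
    center = statistics.mean(baseline_readings)
    sigma = statistics.stdev(baseline_readings)
    return (center - sigma_multiplier * sigma,
            center,
            center + sigma_multiplier * sigma)


def out_of_control_points(readings, limits):
    """Indices of readings that fall outside the control limits."""
    lower, _, upper = limits
    return [i for i, r in enumerate(readings) if r < lower or r > upper]
```

Points outside the limits are the ones that would trigger the investigation and corrective action described above; runs of points on one side of the center line are another trend signal that this sketch does not check.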

Maintenance and Recalibration Scheduling

Establishing appropriate recalibration intervals balances measurement accuracy requirements with practical considerations such as cost and system availability. Factors influencing recalibration frequency include the stability characteristics of the strain gauge, environmental conditions, usage patterns, and the criticality of the measurements. Many applications benefit from risk-based approaches that adjust calibration intervals based on historical performance data and measurement requirements.

Preventive maintenance activities support reliable strain gauge operation and extend calibration intervals where appropriate. Regular cleaning of electrical connections, inspection of protective coatings, and verification of mounting integrity help prevent premature failure or drift. Maintaining detailed maintenance records facilitates trend analysis and supports optimization of both maintenance and calibration schedules.

Troubleshooting Common Calibration Issues

Electrical Problems and Solutions

Electrical issues represent some of the most common problems encountered during strain gauge calibration procedures. Insulation resistance degradation, often caused by moisture infiltration or contamination, can introduce significant measurement errors and compromise calibration accuracy. Regular insulation resistance testing using appropriate test voltages helps identify these issues before they affect calibration results. Proper sealing and protective coatings are essential for preventing moisture-related problems in challenging environments.
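
The size of the error caused by degraded insulation can be estimated with a simple shunt model. This sketch assumes the leakage path acts as a single resistance directly in parallel with the gauge grid, which is a simplification of real, distributed leakage; the function name is hypothetical.

```python
def apparent_strain_from_leakage(gauge_resistance, insulation_resistance,
                                 gauge_factor=2.0):
    """Apparent strain caused by a leakage resistance shunting the gauge.

    The parallel combination lowers the effective resistance by the
    fraction R_g / (R_g + R_ins), which the instrument reads as a
    negative strain of that fraction divided by the gauge factor.
    """
    if insulation_resistance <= 0:
        raise ValueError("insulation resistance must be positive")
    delta_r_over_r = -gauge_resistance / (gauge_resistance + insulation_resistance)
    return delta_r_over_r / gauge_factor
```

Under this model, a 350 Ω gauge with a gauge factor of 2 and only 1 MΩ of insulation resistance reads roughly 175 microstrain low, while the same gauge with 1 GΩ of insulation resistance is off by well under 1 microstrain.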

Signal noise and interference can significantly impact the quality of calibration measurements, particularly when dealing with small signals typical of strain gauge applications. Sources of interference include electromagnetic fields, ground loops, and mechanical vibration transmitted through the mounting structure. Systematic troubleshooting approaches involving signal filtering, shielding improvements, and grounding modifications often resolve these issues and improve overall measurement quality.

Mechanical Installation Challenges

Improper mechanical installation frequently leads to calibration difficulties and poor measurement performance. Incomplete adhesive bonding between the strain gauge and test surface can cause non-linear behavior and reduced sensitivity. Visual inspection techniques, combined with tap testing or acoustic methods, help identify bonding defects that require repair or reinstallation. Proper surface preparation and adhesive selection are critical factors in preventing these issues.

Thermal expansion mismatches between the strain gauge and test structure can introduce significant errors, particularly in applications involving temperature variations. Selecting gauges with appropriate temperature compensation characteristics and understanding the thermal properties of the test material are essential for minimizing these effects. In some cases, active temperature compensation using additional sensors may be necessary to achieve required accuracy levels.
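
The error from an expansion mismatch can be estimated with the commonly used simplified thermal-output relation, in which apparent strain is the sum of a resistance-temperature term and an expansion-difference term. The function and parameter names are hypothetical, and the sketch ignores transverse sensitivity and any temperature dependence of the coefficients.

```python
def thermal_output(delta_t, alpha_specimen, alpha_gauge,
                   tcr_gauge, gauge_factor=2.0):
    """Apparent strain from a temperature change of delta_t.

    alpha_specimen, alpha_gauge: thermal expansion coefficients (per °C)
    tcr_gauge: temperature coefficient of resistance of the grid (per °C)
    """
    mismatch_term = alpha_specimen - alpha_gauge
    resistance_term = tcr_gauge / gauge_factor
    return (resistance_term + mismatch_term) * delta_t
```

As an illustration with assumed values, a specimen expanding at 23e-6 per °C under a gauge matched to 12e-6 per °C produces about 110 microstrain of apparent strain for a 10°C rise, before any resistance-temperature contribution is counted.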

Advanced Calibration Techniques

Multi-Point Calibration Strategies

Advanced applications often require sophisticated calibration approaches that go beyond simple linear relationships between load and strain gauge output. Multi-point calibration procedures establish detailed characterizations of system behavior across the full operational range, including non-linear regions and transition zones. These comprehensive calibrations provide improved accuracy for applications involving large strains or complex loading patterns.

Polynomial curve fitting and other advanced mathematical models may be employed to describe complex strain gauge behavior more accurately than simple linear relationships. However, the increased complexity of these models must be balanced against practical considerations such as computation requirements and user understanding. Validation of complex calibration models through independent measurements or alternative methods provides confidence in their accuracy and applicability.
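
The trade-off between a linear and a polynomial model can be explored by fitting both to the same calibration data and comparing residuals. The sketch below is a small, dependency-free least-squares fit via the normal equations; the names are hypothetical, and this approach is only suitable for low polynomial degrees and modest data ranges, since the normal equations become ill-conditioned otherwise.

```python
def polyfit(xs, ys, degree):
    """Least-squares polynomial coefficients, lowest order first."""
    m = degree + 1
    # Normal equations A c = b, with A[i][j] = sum(x^(i+j)), b[i] = sum(y * x^i).
    a = [[sum(x ** (i + j) for x in xs) for j in range(m)] for i in range(m)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(m)]
    # Gaussian elimination with partial pivoting.
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(a[r][col]))
        if a[pivot][col] == 0:
            raise ValueError("not enough distinct points for this degree")
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, m):
            factor = a[row][col] / a[col][col]
            for k in range(col, m):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * m
    for i in reversed(range(m)):
        tail = sum(a[i][j] * coeffs[j] for j in range(i + 1, m))
        coeffs[i] = (b[i] - tail) / a[i][i]
    return coeffs


def evaluate(coeffs, x):
    """Evaluate the polynomial at x using Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result


def max_abs_residual(xs, ys, coeffs):
    """Largest absolute deviation of the data from the fitted model."""
    return max(abs(y - evaluate(coeffs, x)) for x, y in zip(xs, ys))
```

Comparing `max_abs_residual` for degree 1 and degree 2 on the same data shows how much a higher-order term actually buys; checking the chosen model against points that were not used in the fit is one way to perform the independent validation mentioned above.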

Temperature Compensation Optimization

Sophisticated temperature compensation techniques can significantly improve strain gauge accuracy in applications involving wide temperature ranges or rapid thermal transients. These methods may involve multiple temperature sensors, real-time correction algorithms, or specialized gauge configurations designed for enhanced thermal stability. Implementation of advanced compensation requires careful consideration of the additional complexity and potential failure modes introduced.

Thermal calibration procedures characterize the temperature response of strain gauge systems across the intended operating range. These calibrations typically involve controlled heating and cooling cycles while monitoring both temperature and strain gauge output. The resulting data enables development of correction algorithms that account for thermal effects during actual measurements. Regular thermal recalibration may be necessary to maintain accuracy as system components age or environmental conditions change.
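
A simple form of correction algorithm is a lookup table of apparent strain recorded at zero load during the thermal cycle, interpolated at the measurement temperature and subtracted from the reading. The sketch below assumes such a table exists and is sorted by temperature; the names and the choice of linear interpolation are illustrative assumptions.

```python
from bisect import bisect_right


def interpolate_thermal_output(temp_c, table):
    """Linearly interpolate apparent strain at temp_c.

    table: list of (temperature, apparent_strain) pairs from a zero-load
    thermal cycle, sorted by increasing temperature, at least two entries.
    """
    if len(table) < 2:
        raise ValueError("table needs at least two points")
    temps = [t for t, _ in table]
    if not temps[0] <= temp_c <= temps[-1]:
        raise ValueError("temperature outside the calibrated range")
    i = min(bisect_right(temps, temp_c), len(temps) - 1)
    (t0, e0), (t1, e1) = table[i - 1], table[i]
    return e0 + (e1 - e0) * (temp_c - t0) / (t1 - t0)


def correct_reading(measured_strain, temp_c, table):
    """Subtract the interpolated thermal output from a measured strain."""
    return measured_strain - interpolate_thermal_output(temp_c, table)
```

The sketch deliberately refuses to extrapolate beyond the calibrated temperature range, since the thermal response outside that range has not been characterized.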

Industry Applications and Standards Compliance

Aerospace and Defense Requirements

Aerospace applications demand the highest levels of strain gauge calibration accuracy and reliability due to the safety-critical nature of the measurements. Industry standards such as those developed by SAE International (formerly the Society of Automotive Engineers) and the American Institute of Aeronautics and Astronautics provide detailed requirements for calibration procedures, documentation, and quality assurance. Compliance with these standards often requires specialized equipment, personnel qualifications, and extensive documentation systems.

Defense applications frequently involve additional requirements for security, traceability, and configuration control that impact strain gauge calibration procedures. These requirements may include restrictions on personnel access, special handling procedures for sensitive information, and enhanced documentation controls. Understanding and implementing these requirements is essential for organizations serving defense markets.

Civil Engineering and Infrastructure Monitoring

Civil engineering applications of strain gauge technology focus on long-term monitoring of infrastructure health and safety. Calibration procedures for these applications must address extended service life requirements, environmental exposure effects, and the need for stable measurements over periods measured in years or decades. Specialized installation techniques and protection systems are often required to ensure reliable operation in harsh outdoor environments.

Bridge monitoring, building health assessment, and geotechnical applications each present unique calibration challenges related to scale, accessibility, and environmental conditions. Remote calibration capabilities and wireless data transmission systems are increasingly important for these applications. Calibration procedures must account for the practical limitations imposed by installed systems while maintaining required accuracy levels.

FAQ

What factors affect strain gauge calibration accuracy?

Multiple factors influence strain gauge calibration accuracy, including temperature variations, mechanical loading conditions, electrical interference, and the quality of reference standards used. Environmental conditions such as humidity, vibration, and electromagnetic fields can introduce measurement errors if not properly controlled. The mechanical installation quality, including adhesive bonding and surface preparation, directly impacts the strain transfer characteristics and overall accuracy. Additionally, the stability and resolution of signal conditioning equipment play crucial roles in determining the achievable calibration accuracy.

How often should strain gauges be recalibrated?

Recalibration frequency depends on several factors including the criticality of measurements, environmental conditions, gauge stability characteristics, and regulatory requirements. For critical safety applications, annual calibration is often required, while less demanding applications may allow longer intervals based on demonstrated stability. Factors such as exposure to harsh environments, thermal cycling, mechanical shock, or chemical contamination may necessitate more frequent calibration. Historical performance data and trending analysis can help optimize recalibration intervals while maintaining required accuracy levels.

Can strain gauge calibration be performed in-situ?

In-situ calibration is possible for many strain gauge applications, though it requires careful consideration of the available reference loads and environmental conditions. Portable calibration equipment such as hydraulic jacks or mechanical loading devices can provide known reference forces for field calibration activities. However, the accuracy of in-situ calibration may be limited by environmental factors and the precision of portable equipment. Laboratory calibration generally provides higher accuracy, but in-situ methods offer practical advantages for installed systems that cannot be easily removed.

What documentation is required for strain gauge calibration?

Comprehensive documentation for strain gauge calibration includes gauge specifications, installation details, environmental conditions, reference standards used, measurement data, uncertainty analysis, and calibration certificates. The documentation should establish traceability to national measurement standards and include information about personnel qualifications and procedures followed. Quality management systems often require additional documentation such as calibration procedures, equipment maintenance records, and proficiency testing results. Proper documentation enables measurement traceability, supports regulatory compliance, and facilitates future calibration activities.
